ClickHouse's AgentHouse: When a Database Company Bets on AI Agents

Their internal AI handles 70% of data warehouse queries. So they acquired the chat platform and open-sourced the whole stack.

ClickHouse just made a bold move. In November 2025, they acquired LibreChat, the open-source ChatGPT alternative, and announced the "Agentic Data Stack." It's not a product announcement. It's a thesis: the future of analytics is agents talking to databases.

And they're betting the company on it.

The DWAINE Story

The acquisition didn't come from abstract strategy. It came from DWAINE.

DWAINE (Data Warehouse AI Natural Expert) is ClickHouse's internal analytics agent. It started as an experiment. Within months, it became how most of the company accesses data.

The numbers are striking:

| Metric | Value |
|--------|-------|
| Internal users | 250+ |
| Daily conversations | 50-70 |
| Daily messages | 200+ |
| Share of analytics workload | ~70% |
| Tokens processed daily | 33 million |
| DWH team pressure reduction | 50-70% |

That last number is the real story. ClickHouse has a three-person data warehouse team serving 300+ users. Before DWAINE, they were drowning in ad-hoc requests. Now, the agent handles routine questions while analysts focus on strategic work.

"What should be a 5-minute question became a 30-45 minute exercise. A single product insight could take a senior analyst an entire day to properly investigate."

DWAINE changed that equation.

The Architecture

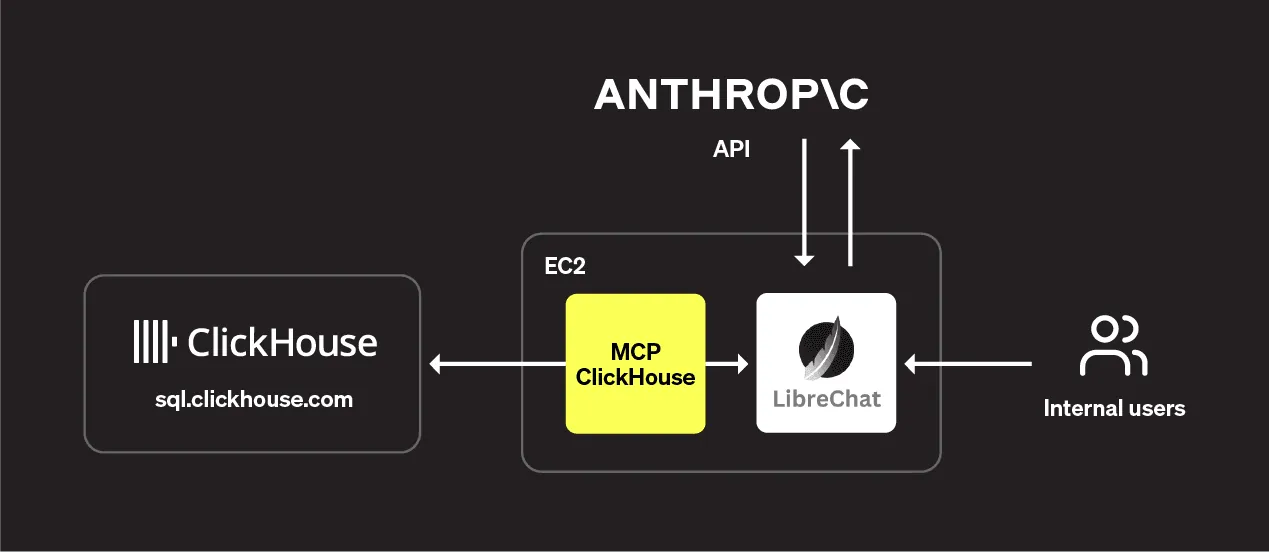

The stack that powers DWAINE is now open source. Three components:

LibreChat handles the conversational interface. It's a self-hosted ChatGPT alternative with enterprise features like SSO, audit logging, and multi-LLM support. Crucially, it was one of the first platforms to fully implement MCP (Model Context Protocol) server support.

The ClickHouse MCP server bridges the database and the LLM. It enables efficient data transfer, query optimization, context management for stateful conversations, and secure database access. The server enforces read-only queries by default.
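To make the read-only default concrete, here is a minimal sketch of a statement-level guard. It is an illustration only, not the ClickHouse MCP server's actual code: the `is_read_only` function and the `READ_ONLY_KEYWORDS` allow-list are hypothetical names, and the check is deliberately conservative.

```python
import re

# Hypothetical allow-list of leading keywords treated as read-only.
READ_ONLY_KEYWORDS = {"SELECT", "WITH", "SHOW", "DESCRIBE", "DESC", "EXPLAIN", "EXISTS"}


def is_read_only(sql: str) -> bool:
    """Return True if `sql` is a single statement that starts with a read-only keyword."""
    # Remove /* ... */ block comments and -- line comments before inspecting.
    stripped = re.sub(r"/\*.*?\*/", " ", sql, flags=re.DOTALL)
    stripped = re.sub(r"--[^\n]*", " ", stripped)
    statement = stripped.strip().rstrip(";").strip()
    if not statement or ";" in statement:
        # Reject empty input and anything that looks like several statements.
        # This also rejects a ';' inside a string literal, which is the safe side.
        return False
    first_word = statement.split(None, 1)[0].upper()
    return first_word in READ_ONLY_KEYWORDS
```

A text filter like this is only a first line of defense. Enforcement belongs in the database itself, for example by connecting with a user whose ClickHouse `readonly` setting is enabled.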

ClickHouse provides sub-second query response times. This matters more than you'd think. Slow queries break the conversational illusion. When responses take seconds, it feels like talking to a human analyst. When they take minutes, it feels like waiting for a report.

The LLM layer uses Anthropic's Claude (via AWS Bedrock), chosen for its strength in understanding complex contexts and reasoning about structured data.

AgentHouse: Try It Yourself

ClickHouse didn't just build internal tools. They built AgentHouse, a public demo at llm.clickhouse.com where anyone can try agentic analytics.

The demo provides access to 37 real-world datasets:

- GitHub data (hourly updates)

- PyPI package downloads (1.3+ trillion rows)

- RubyGems (180+ billion rows)

- Stack Overflow, Hacker News, Reddit

- NYC Taxi trips, OpenSky flight data

- IMDB movie database

No account needed. No data upload required. Just ask questions in plain English.

When you type "Which GitHub repositories had the most stars last month?", here's what happens:

1. LibreChat receives your question

2. Claude interprets it and fetches schema metadata from the MCP server

3. Claude generates an optimized SQL query

4. The MCP server executes it against ClickHouse Cloud

5. Claude formats the results into a conversational response
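The flow above can be sketched as one function that wires the steps together. This is a hedged illustration, not AgentHouse's code: `run_turn`, `AgentTurn`, and the four callables are hypothetical stand-ins for the LibreChat, Claude, and MCP server calls, and the stubs at the bottom return canned values.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentTurn:
    """Everything produced while answering one question, kept for inspection."""
    question: str
    schema: str
    sql: str
    rows: list
    answer: str


def run_turn(
    question: str,
    fetch_schema: Callable[[], str],
    generate_sql: Callable[[str, str], str],
    execute_sql: Callable[[str], list],
    summarize: Callable[[str, list], str],
) -> AgentTurn:
    """Run one question through the pipeline, recording each intermediate result."""
    schema = fetch_schema()               # schema metadata from the MCP server
    sql = generate_sql(question, schema)  # the model drafts a SQL query
    rows = execute_sql(sql)               # the MCP server runs it against the database
    answer = summarize(question, rows)    # the model phrases the result
    return AgentTurn(question, schema, sql, rows, answer)


# Canned stubs standing in for the real services (all values are made up).
turn = run_turn(
    "Which GitHub repositories had the most stars last month?",
    fetch_schema=lambda: "github_events(repo_name, event_type, created_at)",
    generate_sql=lambda q, s: "SELECT repo_name, count() AS stars FROM github_events",
    execute_sql=lambda sql: [("example/repo", 123)],
    summarize=lambda q, rows: f"Top repository: {rows[0][0]} with {rows[0][1]} stars.",
)
print(turn.answer)
```

Keeping the generated SQL on the returned object is a deliberate choice: it lets a user or a reviewer see exactly which query produced the answer.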

The name "AgentHouse" combines "Agent" (the LLM) with "House" (from ClickHouse). Subtle, but intentional.

The LibreChat Acquisition

Why buy an open-source chat platform?

Because LibreChat already had enterprise adoption. At Shopify, it powers "reflexive AI use" across the company:

"LibreChat powers reflexive AI use across Shopify. With near universal adoption and thousands of custom agents, teams use it to solve real problems, increase productivity, and keep the quality bar high. By connecting more than 30 internal MCP servers, it democratizes access to critical information across the company."

Shopify's approach is infrastructure-first: instead of building one-off features, they build reusable platforms. LibreChat became their AI backbone, connecting everything from Slack to internal databases to documentation systems.

ClickHouse saw this pattern and acquired the platform to accelerate it.

The Healthcare Angle

Beyond tech companies, healthcare is emerging as a key use case.

cBioPortal, the open-source cancer genomics platform used by researchers worldwide, built "cBioAgent" using the ClickHouse + LibreChat stack. The result:

"By leveraging the ClickHouse, MCP, and LibreChat stacks, we quickly delivered a prototype to cBioPortal users, enabling them to ask entirely new questions about cancer genomics and treatment pathways, get answers quickly, and explore data in ways not possible through the existing user interface."

cBioPortal v7 now runs exclusively on ClickHouse Cloud. The database that powers high-speed analytics is the same one powering natural language access to cancer research data.

What Makes This Different

We've covered many enterprise text-to-SQL implementations: Uber's QueryGPT, LinkedIn's SQL Bot, Salesforce's Horizon Agent. What makes ClickHouse's approach notable?

LibreChat is MIT-licensed. The ClickHouse MCP server is Apache-2.0. ClickHouse itself is Apache-2.0. Every layer is under a permissive open-source license, so there's no vendor lock-in at any layer.

Most AI data tools treat the database as a commodity. ClickHouse treats sub-second query performance as foundational to the agent experience. When your 2.1 PB data warehouse returns results in milliseconds, conversational analytics actually feels conversational.

Model Context Protocol is Anthropic's standard for connecting AI to external tools. By building on MCP, ClickHouse ensures their stack works with any MCP-compatible client, not just LibreChat.

DWAINE isn't a demo. It's how ClickHouse runs. 70% of internal analytics goes through an AI agent. That's real production validation.

The Guardrails

ClickHouse is refreshingly honest about limitations. Their internal guidance for DWAINE users:

"If a really important decision must be made, we ask users to ask DWAINE to write a SQL query to prove the results of his research with comprehensive comments. The user could then take this query to a traditional ad-hoc SQL tool and check DWAINE's logic."

AI handles routine questions. Humans verify high-stakes decisions. This hybrid model acknowledges that LLMs make mistakes while still letting the agent carry roughly 70% of the analytics workload.

They also found that LLMs amplify data quality problems:

"LLMs magnify data quality issues dramatically."

Bad metadata, stale definitions, inconsistent naming: all become more visible when an AI confidently generates wrong queries. ClickHouse responded by strengthening automated validation, data lineage tracking, and freshness indicators before rolling out DWAINE widely.
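A freshness indicator can be as simple as comparing a table's last load time against an allowed age. The sketch below is an assumption about what such a check might look like, not ClickHouse's implementation; `freshness_label` and its parameters are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def freshness_label(last_loaded: datetime, max_age: timedelta, now: datetime) -> str:
    """Return a short label an agent could attach to answers built on this table."""
    age = now - last_loaded
    if age <= max_age:
        return "fresh"
    hours_late = (age - max_age).total_seconds() / 3600
    return f"stale (about {hours_late:.0f}h past the allowed age)"


now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
loaded_today = datetime(2025, 1, 2, 6, 0, tzinfo=timezone.utc)
loaded_yesterday = datetime(2025, 1, 1, 6, 0, tzinfo=timezone.utc)

print(freshness_label(loaded_today, timedelta(hours=24), now))
print(freshness_label(loaded_yesterday, timedelta(hours=24), now))
```

Surfacing the label next to the answer gives users a reason to doubt a confident response before they act on it.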

From Demo to Deployment

AgentHouse proves the core thesis works. But production deployment surfaces additional requirements that the demo environment doesn't address.

AgentHouse lives at llm.clickhouse.com. Enterprise users live in Slack, Teams, and email. The gap between "cool demo" and "daily habit" is distribution. Tools that require behavior change struggle to get adopted. Tools that appear where users already work succeed.

DWAINE works because ClickHouse invested heavily in documentation: an MDBook-based business glossary, column dictionaries, curated query examples. That's the right approach. But when definitions change, when metrics evolve, when new tables appear, who updates the context? And how do you ensure updates don't break existing queries?

Production context management increasingly looks like code: version-controlled definitions, review workflows through pull requests, testing before deployment, instant rollback when something breaks.
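One way to picture "testing before deployment" is a check that runs in CI whenever a definition changes. The sketch below assumes a made-up glossary format and schema snapshot; `GLOSSARY`, `SCHEMA`, and `validate_glossary` are hypothetical and do not describe DWAINE's actual files.

```python
# Hypothetical glossary: metric name -> definition and the columns it relies on.
GLOSSARY = {
    "active_users": {
        "definition": "Distinct users with at least one query in the last 30 days.",
        "columns": ["usage.user_id", "usage.event_time"],
    },
}

# Hypothetical snapshot of the warehouse schema: table -> set of column names.
SCHEMA = {"usage": {"user_id", "event_time", "query_count"}}


def validate_glossary(glossary: dict, schema: dict) -> list[str]:
    """Return human-readable problems; an empty list means the check passed."""
    problems = []
    for metric, entry in glossary.items():
        if not entry.get("definition", "").strip():
            problems.append(f"{metric}: missing definition")
        for ref in entry.get("columns", []):
            # Column references are written as "table.column".
            table, _, column = ref.partition(".")
            if column not in schema.get(table, set()):
                problems.append(f"{metric}: unknown column {ref}")
    return problems


print(validate_glossary(GLOSSARY, SCHEMA))
```

A pull request that renames a column without updating the glossary would fail this check before the agent ever sees the stale definition.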

ClickHouse explicitly advises users to verify important decisions manually. That's appropriate caution. But it shifts the governance burden to end users. In enterprises with hundreds of users across different roles and data permissions, manual verification doesn't scale.

Row-level security, comprehensive audit trails, and approval workflows for sensitive queries aren't nice-to-haves. They're deployment requirements.
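As a rough sketch of what platform-level governance could look like, the function below writes an audit record for every request and routes queries that touch sensitive tables to an approval step. All names here (`SENSITIVE_TABLES`, `review_query`, the table names) are hypothetical, and the substring match is intentionally crude; a real system would parse the SQL.

```python
import json
from datetime import datetime, timezone

# Hypothetical list of tables whose queries need sign-off before they run.
SENSITIVE_TABLES = {"payroll", "customer_pii"}


def review_query(user: str, sql: str, audit_log: list) -> str:
    """Append an audit record and return 'run' or 'needs_approval'."""
    lowered = sql.lower()
    # Crude detection: a sensitive table name appears anywhere in the query text.
    touched = sorted(t for t in SENSITIVE_TABLES if t in lowered)
    decision = "needs_approval" if touched else "run"
    audit_log.append(json.dumps({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "sql": sql,
        "sensitive_tables": touched,
        "decision": decision,
    }))
    return decision


log: list = []
print(review_query("ana", "SELECT count() FROM events", log))
print(review_query("ana", "SELECT * FROM payroll", log))
```

Because the record is written before the decision is returned, every request leaves a trace whether or not it is allowed to run.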

The technology works. The deployment model is still emerging. Teams building production AI analytics will need to solve these problems regardless of which database powers the backend.

The Bottom Line

ClickHouse's bet is clear: the future of analytics is conversational, and infrastructure companies should own the stack.

By acquiring LibreChat and open-sourcing the integration layer, they're positioning ClickHouse as the database for AI agents. Not just fast queries, but queries that LLMs can generate and execute reliably.

The metrics from DWAINE validate the approach:

- 70% of analytics workload shifted to AI

- 50-70% reduction in analyst burden

- 250+ users adopted within months

- Sub-second response times maintaining conversational flow

For data teams evaluating agentic analytics, AgentHouse offers a rare opportunity: try the full stack, with real data, without any setup. Visit llm.clickhouse.com and see what 37 datasets and sub-second queries can do.

The agentic data stack is no longer theoretical. It's running in production at ClickHouse, Shopify, and cBioPortal. And it's open source.

For teams ready to deploy AI analytics beyond demos: the next frontier is meeting users in Slack and Teams, managing context with git-like rigor, and building governance into the platform rather than relying on user vigilance. That's where the real work begins.

Related reading:

- Uber's QueryGPT: Multi-Agent Architecture for Enterprise Scale

- Instacart's Intent Engine: Why Fine-Tuning Beats RAG

- Pinterest's Text-to-SQL: Solving Table Discovery First


Rick Radewagen

Rick is a co-founder of Dot, on a mission to make data accessible to everyone. When he's not building AI-powered analytics, you'll find him obsessing over well-arranged pixels and surprising himself by learning new languages.