Uber's Finch: The Financial Data Agent That Lives in Slack

Uber just published a deep dive into Finch, their conversational AI data agent for financial analytics. The concept is familiar: ask questions in natural language, get answers from your data warehouse. What's different is the execution.

Finch doesn't just generate SQL. It maintains context, validates outputs against "golden queries," and routes between specialized agents based on intent. And it all happens in Slack, where finance teams already work.

The Problem with Financial Data Access

At Uber's scale, financial analysts face a familiar challenge. They need answers from multiple systems: data warehouses, internal dashboards, financial platforms. Each query requires logging into different tools, writing SQL, and often waiting for engineering support.

The cost isn't just time. It's opportunity cost. When getting a number takes hours instead of seconds, fewer questions get asked. Decisions rely more on intuition, less on data.

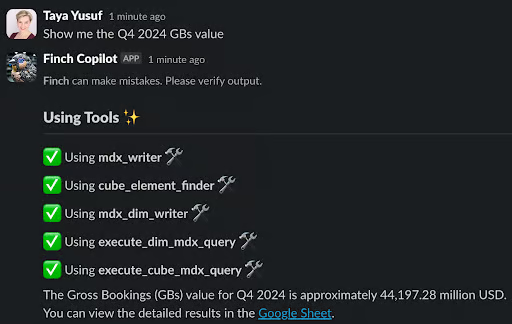

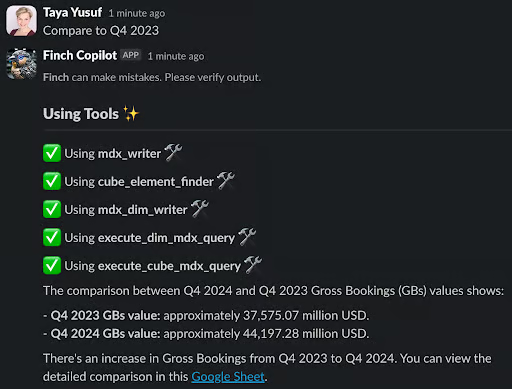

Finch changes that equation. A finance team member can now ask "What was GB value in US&C in Q4 2024?" directly in Slack and get an answer in seconds.

The Architecture That Makes It Work

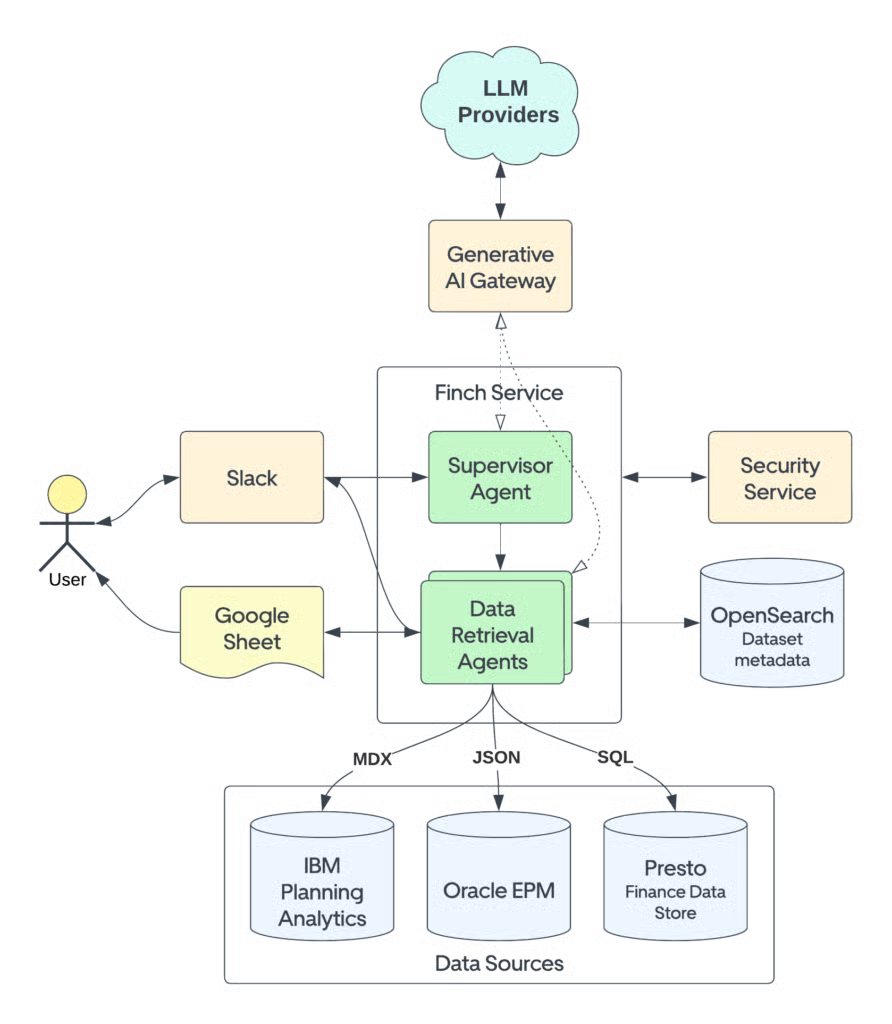

Finch is built on four key components that work together:

1. Generative AI Gateway

Uber accesses "a wide array of self-hosted and third-party large language models" through their internal AI Gateway. This abstraction layer means they can swap models as technology evolves without rebuilding the application.
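The point of a gateway is that agent code depends on one interface rather than on any particular model client. The sketch below shows that idea under loud assumptions: `AIGateway`, `register`, `complete`, and the model names are hypothetical, and each "backend" is a plain function standing in for a real self-hosted or third-party model client. It is not Uber's gateway API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional

# Hypothetical backend type: a function from prompt to completion. A real gateway
# would wrap clients for self-hosted and third-party models behind this shape.
ModelBackend = Callable[[str], str]


@dataclass
class AIGateway:
    """Illustrative stand-in for an internal model gateway (not Uber's API)."""
    backends: Dict[str, ModelBackend] = field(default_factory=dict)
    default_model: Optional[str] = None

    def register(self, name: str, backend: ModelBackend) -> None:
        self.backends[name] = backend
        if self.default_model is None:
            self.default_model = name  # first registered model becomes the default

    def complete(self, prompt: str, model: Optional[str] = None) -> str:
        name = model or self.default_model
        if name not in self.backends:
            raise KeyError(f"unknown model: {name}")
        return self.backends[name](prompt)


# Agent code calls only gateway.complete(); swapping models means changing
# a registration or a model name, not the agent logic.
gateway = AIGateway()
gateway.register("self-hosted-small", lambda p: f"[small] {p}")
gateway.register("third-party-large", lambda p: f"[large] {p}")

print(gateway.complete("Write SQL for Q4 gross bookings"))
print(gateway.complete("Write SQL for Q4 gross bookings", model="third-party-large"))
```

Routing per call (the optional `model` argument) is one design choice; a gateway could equally pick models by task type or cost policy.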

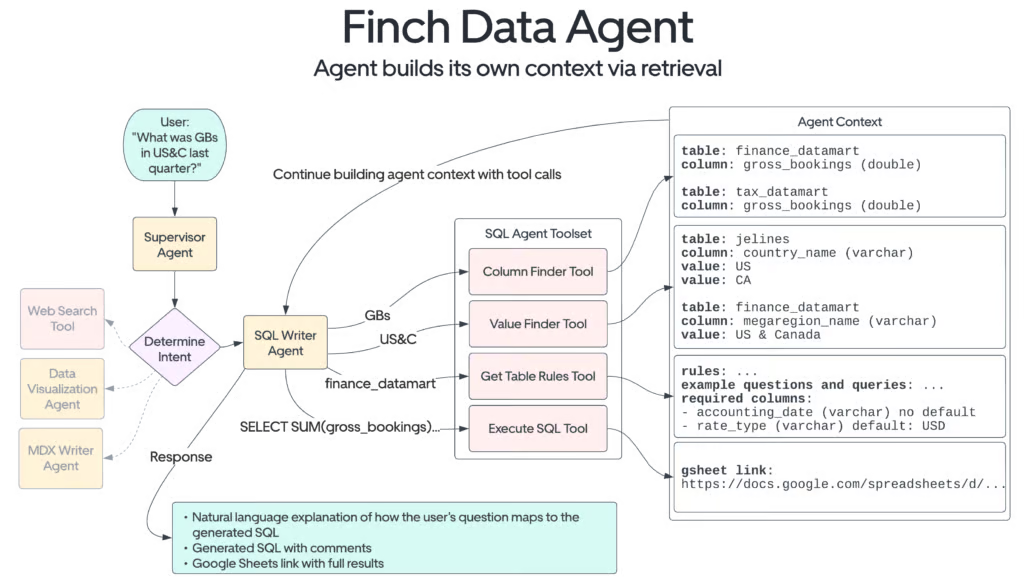

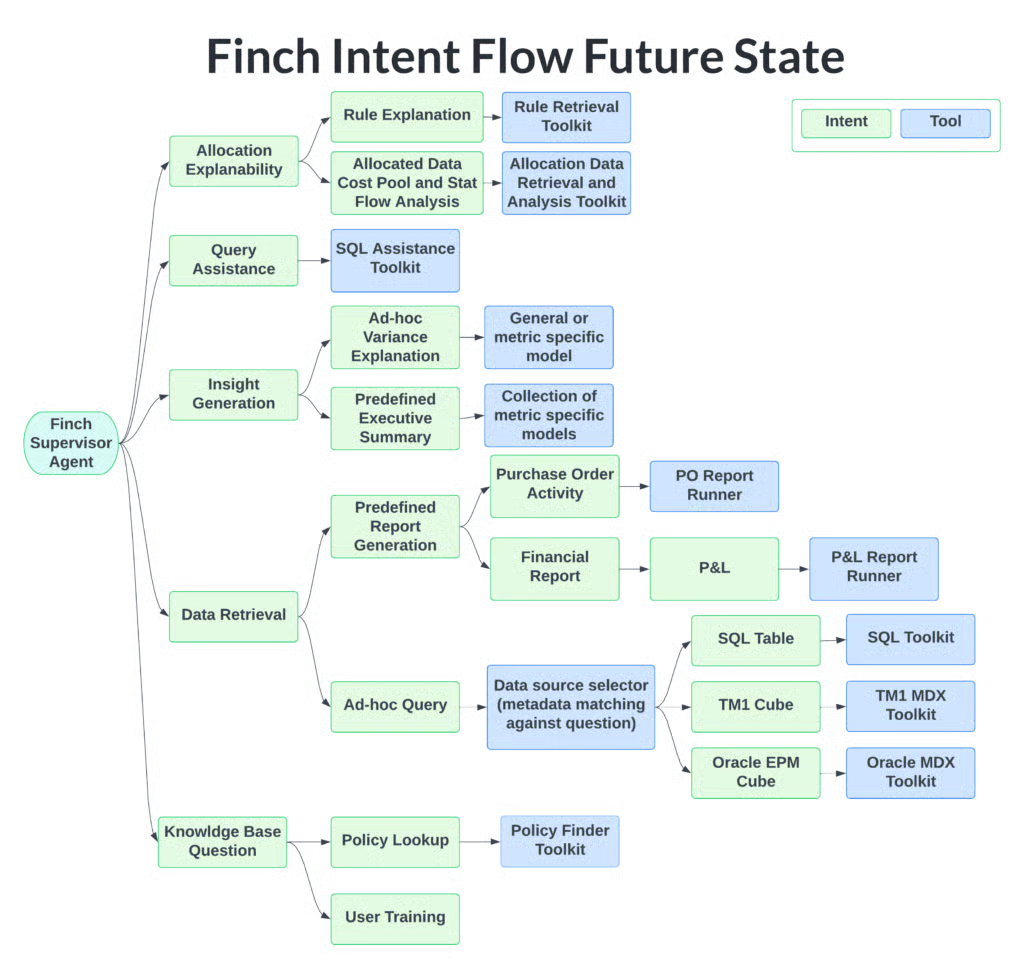

2. LangGraph for Agent Orchestration

The system uses LangChain's LangGraph to coordinate specialized agents. A Supervisor Agent receives each query and routes it to the appropriate sub-agent based on intent: the SQL Writer Agent for data retrieval, the Document Reader Agent for unstructured content.
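The Supervisor pattern boils down to "classify intent, then dispatch to one specialist." The source does not describe how Finch's Supervisor classifies intent (it is presumably LLM-driven inside a LangGraph graph), so the sketch below swaps in a crude keyword heuristic and uses no LangGraph APIs. The agent functions, intent labels, and `DOC_KEYWORDS` are all hypothetical.

```python
from typing import Callable, Dict


# Hypothetical sub-agents; in Finch these are LLM-backed agents orchestrated by LangGraph.
def sql_writer_agent(question: str) -> str:
    return f"SQL Writer handling: {question}"


def document_reader_agent(question: str) -> str:
    return f"Document Reader handling: {question}"


SUB_AGENTS: Dict[str, Callable[[str], str]] = {
    "data_retrieval": sql_writer_agent,
    "document_lookup": document_reader_agent,
}

# Keyword heuristic standing in for the Supervisor's (unspecified) intent classifier.
DOC_KEYWORDS = ("policy", "document", "memo", "guideline")


def classify_intent(question: str) -> str:
    q = question.lower()
    if any(word in q for word in DOC_KEYWORDS):
        return "document_lookup"
    return "data_retrieval"  # default: treat it as a question for the data marts


def supervisor(question: str) -> str:
    """Route the question to exactly one specialized sub-agent."""
    return SUB_AGENTS[classify_intent(question)](question)


print(supervisor("What was GB value in US&C in Q4 2024?"))
print(supervisor("What does the travel expense policy say about meals?"))
```

Keeping the routing table separate from the classifier means a new agent (say, for forecasting) is one new entry plus one new intent label.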

3. OpenSearch Semantic Layer

This is the novel part. Finch stores "dataset metadata, including SQL table columns, values, and natural language aliases" in OpenSearch. When a user asks about "US&C," the system fuzzy-matches the phrase to the actual column value representing the US and Canada region.

Conventional text-to-SQL often gets WHERE clauses wrong because the LLM doesn't know the specific values stored in your columns. By maintaining a semantic layer of aliases in OpenSearch, Finch achieves "significantly improved reliability in generating accurate WHERE clause filters compared to conventional methods."
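Alias resolution is the step that turns a user's phrase into a filter the database will actually match. As a minimal sketch, the code below replaces OpenSearch with an in-memory dict and Python's `difflib.get_close_matches` (a sequence-similarity matcher, not OpenSearch's fuzzy query). The alias table, the `region` column, and stored values such as `US_AND_CANADA` are hypothetical; the source does not say how Uber encodes regions.

```python
import difflib
from typing import Dict, Optional, Tuple

# Hypothetical metadata index: natural-language alias -> (column, stored value).
# Finch keeps this kind of metadata in OpenSearch; a dict stands in for it here.
ALIAS_INDEX: Dict[str, Tuple[str, str]] = {
    "us&c": ("region", "US_AND_CANADA"),
    "us and canada": ("region", "US_AND_CANADA"),
    "north america": ("region", "US_AND_CANADA"),
    "latam": ("region", "LATIN_AMERICA"),
    "latin america": ("region", "LATIN_AMERICA"),
    "emea": ("region", "EMEA"),
}


def resolve_filter(term: str, cutoff: float = 0.6) -> Optional[Tuple[str, str]]:
    """Map a user's phrase to a (column, value) pair via fuzzy alias matching."""
    matches = difflib.get_close_matches(term.lower().strip(), ALIAS_INDEX.keys(), n=1, cutoff=cutoff)
    return ALIAS_INDEX[matches[0]] if matches else None


def where_fragment(term: str) -> Tuple[str, str]:
    resolved = resolve_filter(term)
    if resolved is None:
        raise ValueError(f"no known value matches {term!r}")
    column, value = resolved
    # Column name comes from our own index; the value is returned for parameter binding.
    return f"{column} = ?", value


print(where_fragment("US&C"))         # exact alias hit
print(where_fragment("US & Canada"))  # fuzzy hit on "us and canada"
```

Raising on a miss, rather than letting the LLM guess a literal, is what keeps unmatched phrases out of the WHERE clause.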

4. Curated Data Marts

Rather than querying raw tables, Finch operates on "curated single-table data marts that consolidate frequently accessed financial and operational metrics." These are optimized for simplicity: no complex joins, minimal query complexity.
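A single-table mart shrinks what the model has to get right to a SELECT with a couple of filters. The sketch below uses an in-memory SQLite table to show the shape; the table name `finance_mart`, its columns, and the numbers are hypothetical illustrations, not Uber's schema or data.

```python
import sqlite3

# Hypothetical single-table mart: metrics are joined and aggregated upstream,
# so generated SQL only needs SELECT / WHERE / aggregation on one table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE finance_mart (region TEXT, quarter TEXT, gross_bookings REAL)")
conn.executemany(
    "INSERT INTO finance_mart VALUES (?, ?, ?)",
    [  # illustrative rows only
        ("US_AND_CANADA", "2024-Q3", 100.0),
        ("US_AND_CANADA", "2024-Q4", 120.0),
        ("EMEA", "2024-Q4", 80.0),
    ],
)

# Filter values would come from the alias-resolution step and are bound as
# parameters rather than pasted into the SQL string.
query = "SELECT SUM(gross_bookings) FROM finance_mart WHERE region = ? AND quarter = ?"
(total,) = conn.execute(query, ("US_AND_CANADA", "2024-Q4")).fetchone()
print(total)
```

With no joins to choose, the remaining failure modes are mostly the column and filter choices, which is exactly where the semantic layer helps.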

The User Experience

The Slack integration isn't an afterthought. Uber built a dedicated callback handler that updates status messages in real time, so users see exactly what Finch is doing: "identifying data source," "building SQL," "executing query."
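A progress callback like this typically edits one status message in place instead of posting a new message per step. The sketch below assumes that design; `SlackStatusHandler`, `on_step`, `on_done`, and the injected `update_message` function are hypothetical, and the transport is a fake that records text rather than a real Slack client.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SlackStatusHandler:
    """Hypothetical callback that rewrites one status message as the agent progresses."""
    update_message: Callable[[str, str], None]  # (message_ts, text); stand-in for a Slack client call
    message_ts: str                             # identifies the message being edited
    history: List[str] = field(default_factory=list)

    def on_step(self, step: str) -> None:
        self.history.append(step)
        # Overwrite the same message so the thread shows only the current status.
        self.update_message(self.message_ts, f"Status: {step}...")

    def on_done(self, answer: str) -> None:
        self.update_message(self.message_ts, answer)


# Fake transport that records what would have been sent to Slack.
sent: List[str] = []
handler = SlackStatusHandler(update_message=lambda ts, text: sent.append(text),
                             message_ts="1700000000.000100")

for step in ("identifying data source", "building SQL", "executing query"):
    handler.on_step(step)
handler.on_done("Answer ready")
print(sent)
```

Injecting `update_message` keeps the handler testable without a Slack workspace.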

They also leverage Slack's AI Assistant APIs for suggested follow-up questions and persistent pinning of useful queries. When users need to iterate on a question, Finch maintains context across the conversation.
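One way to keep context across a conversation is to remember what each Slack thread already resolved, so a follow-up like "What about Q3?" only overrides the part that changed. The source does not describe Finch's context mechanism; this is a minimal sketch with a hypothetical in-memory store and hypothetical function names.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, List

# Hypothetical in-memory store keyed by Slack thread timestamp. Each turn records
# the question and the filters resolved for it (a production system would persist this).
threads: DefaultDict[str, List[Dict[str, str]]] = defaultdict(list)


def remember(thread_ts: str, question: str, filters: Dict[str, str]) -> None:
    threads[thread_ts].append({"question": question, **filters})


def carry_over_filters(thread_ts: str, new_filters: Dict[str, str]) -> Dict[str, str]:
    """Start from the previous turn's filters; the follow-up overrides only what it changes."""
    history = threads.get(thread_ts)  # .get() does not create an entry for unknown threads
    previous: Dict[str, str] = {}
    if history:
        previous = {k: v for k, v in history[-1].items() if k != "question"}
    return {**previous, **new_filters}


# Turn 1 is a full question; turn 2 ("What about Q3?") only changes the quarter.
remember("thread-1", "What was GB value in US&C in Q4 2024?",
         {"region": "US_AND_CANADA", "quarter": "2024-Q4"})
print(carry_over_filters("thread-1", {"quarter": "2024-Q3"}))
```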

For large result sets, Finch exports to Google Sheets automatically, keeping the Slack experience fast while supporting deeper analysis.
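The export decision is a size check before rendering. The source doesn't say where Finch draws the line, so `INLINE_ROW_LIMIT` below is an assumed value, and `export_to_sheet` is a hypothetical placeholder that only serializes CSV; it does not call the Google Sheets API.

```python
import csv
import io
from typing import List, Sequence

INLINE_ROW_LIMIT = 20  # assumption: the source doesn't state Finch's cutoff


def export_to_sheet(csv_text: str) -> str:
    """Hypothetical stand-in for a Google Sheets upload; returns a placeholder message."""
    n_rows = len(csv_text.splitlines()) - 1  # minus the header row
    return f"Exported {n_rows} rows to a spreadsheet: <link placeholder>"


def render_result(header: Sequence[str], rows: List[Sequence[object]]) -> str:
    if len(rows) <= INLINE_ROW_LIMIT:
        # Small result: a compact text table that fits in a Slack message.
        lines = [" | ".join(header)] + [" | ".join(str(v) for v in row) for row in rows]
        return "\n".join(lines)
    # Large result: serialize for export instead of flooding the Slack thread.
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(header)
    writer.writerows(rows)
    return export_to_sheet(buf.getvalue())


print(render_result(["region", "gb"], [("EMEA", 80.0)]))
print(render_result(["i"], [(i,) for i in range(25)]))
```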

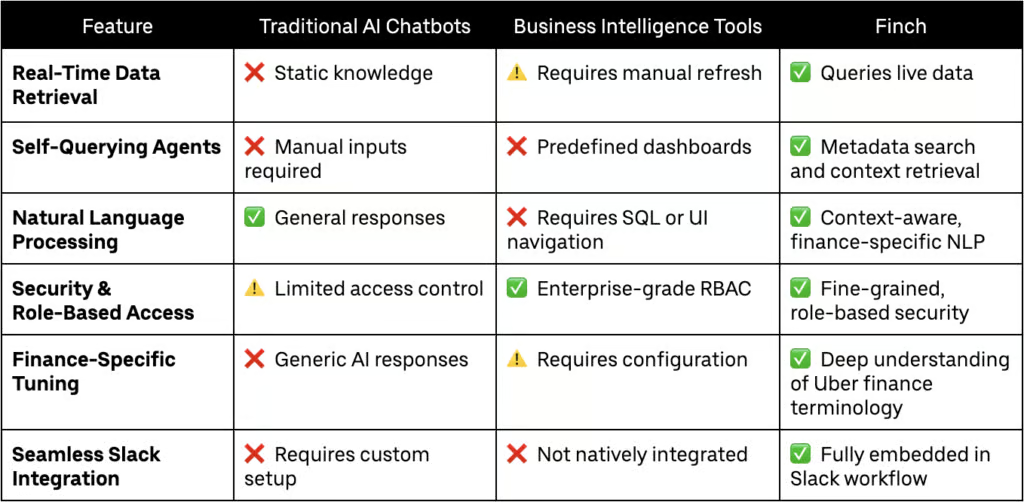

How Finch Compares to Alternatives

Uber's team published a comparison against other approaches. The key differentiators:

- Real-time Slack updates vs. waiting for results

- Contextual follow-ups vs. starting over for each query

- Domain-specific semantic layer vs. generic SQL generation

- Human-in-the-loop validation for high-stakes queries
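The human-in-the-loop differentiator amounts to a routing decision before an answer is delivered. The sketch below assumes two triggers, an executive audience and an explicit review request; `AgentAnswer`, `route_answer`, the `audience` tag, and `HIGH_STAKES_AUDIENCES` are hypothetical names, not Finch's implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Delivery(Enum):
    SEND = "send"
    HOLD_FOR_REVIEW = "hold_for_review"


@dataclass
class AgentAnswer:
    question: str
    result: str
    audience: str                       # hypothetical tag, e.g. "analyst" or "executive"
    validation_requested: bool = False  # e.g. set when a user clicks "Request validation"


HIGH_STAKES_AUDIENCES = {"executive"}  # assumption: which audiences count as high-stakes


def route_answer(answer: AgentAnswer) -> Delivery:
    """Hold the answer for subject-matter-expert review when stakes are high or review was requested."""
    if answer.validation_requested or answer.audience in HIGH_STAKES_AUDIENCES:
        return Delivery.HOLD_FOR_REVIEW
    return Delivery.SEND


print(route_answer(AgentAnswer("Q4 GB in US&C?", "120.0", audience="analyst")))
print(route_answer(AgentAnswer("Q4 GB in US&C?", "120.0", audience="executive")))
```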

Quality Assurance: Golden Queries and Regression Testing

Here's where production engineering matters. Finch doesn't trust raw LLM outputs.

Their evaluation methodology includes:

- Agent accuracy evaluation: Each sub-agent is tested against expected responses. For the SQL Writer Agent, generated queries are compared against "golden queries" with known correct results.

- Supervisor routing accuracy: Testing ensures queries get routed to the right agent, avoiding intent collision.

- End-to-end validation: Simulated real-world queries run through the entire system before deployment.

- Regression testing: Historical queries are re-run before each deployment to detect accuracy drift.
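Golden-query evaluation and regression gating can share one harness. In this sketch, generated SQL "passes" if it returns the same result set as the golden query, which is one reasonable reading of "compared against golden queries with known correct results"; it tolerates differently worded but equivalent SQL. The fixture table, golden set, baseline score, and `flawed_agent` are all hypothetical.

```python
import sqlite3
from collections import Counter
from typing import Callable, Dict

# Hypothetical fixture mart; a real harness would run against the actual data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE finance_mart (region TEXT, quarter TEXT, gross_bookings REAL)")
conn.executemany(
    "INSERT INTO finance_mart VALUES (?, ?, ?)",
    [("US_AND_CANADA", "2024-Q4", 120.0), ("EMEA", "2024-Q4", 80.0)],
)

# Golden set: question -> SQL known to return the correct answer.
GOLDEN: Dict[str, str] = {
    "GB in US&C, Q4 2024": "SELECT SUM(gross_bookings) FROM finance_mart "
                           "WHERE region = 'US_AND_CANADA' AND quarter = '2024-Q4'",
    "GB in EMEA, Q4 2024": "SELECT SUM(gross_bookings) FROM finance_mart "
                           "WHERE region = 'EMEA' AND quarter = '2024-Q4'",
}


def result_set(sql: str) -> Counter:
    # Compare rows as a multiset so row order doesn't cause false mismatches.
    return Counter(conn.execute(sql).fetchall())


def accuracy(generate_sql: Callable[[str], str]) -> float:
    """Fraction of golden questions whose generated SQL returns the golden result set."""
    passed = 0
    for question, golden_sql in GOLDEN.items():
        try:
            if result_set(generate_sql(question)) == result_set(golden_sql):
                passed += 1
        except sqlite3.Error:
            pass  # SQL that fails to execute counts as a miss
    return passed / len(GOLDEN)


BASELINE_ACCURACY = 1.0  # hypothetical score of the currently deployed version


def passes_regression(generate_sql: Callable[[str], str], tolerance: float = 0.0) -> bool:
    """Block deployment if accuracy on the golden set drops below the baseline."""
    return accuracy(generate_sql) >= BASELINE_ACCURACY - tolerance


# Stand-in agent that maps the EMEA question to a value that isn't in the column.
def flawed_agent(question: str) -> str:
    region = "US_AND_CANADA" if "US&C" in question else "Europe"
    return ("SELECT SUM(gross_bookings) FROM finance_mart "
            f"WHERE region = '{region}' AND quarter = '2024-Q4'")


print(accuracy(flawed_agent))
print(passes_regression(flawed_agent))
```

Note that the flawed agent's EMEA query runs without error and simply returns the wrong number, which is the kind of silent WHERE-clause failure only a result comparison catches.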

This multi-layered validation is what separates production AI systems from demos.

What They're Honest About

Uber acknowledges limitations directly:

"Like all generative AI technologies at this point, it's not 100% reliable and is prone to hallucination."

Their response isn't to hide this. It's to build guardrails:

- Human-in-the-loop validation: For executive-level queries (CEO/CFO reports), Finch includes a "Request validation" button that routes outputs to subject matter experts before delivery.

- Parallel sub-agent execution: Multiple validation paths run simultaneously to reduce latency while maintaining accuracy.

- Pre-fetching: Frequently accessed metrics are pre-computed for near-instant responses.

The Roadmap

Finch isn't finished. Their planned improvements include:

- Expanded integrations with Uber FinTech systems

- Additional agents for forecasting, budgeting, and automated reporting

- Deeper human-in-the-loop workflows for sensitive financial data

Key Lessons for Data Teams

1. The semantic layer is the secret weapon. OpenSearch with natural language aliases for column values dramatically improves WHERE clause accuracy. This is a technique other teams should steal.

2. Curated data marts > raw tables. Simplifying the data structure before LLM consumption reduces errors and improves performance.

3. LangGraph enables sophisticated routing. The Supervisor Agent pattern lets you build specialized agents for different intents while maintaining a unified interface.

4. Real-time feedback matters. Showing users what the system is doing builds trust and reduces perceived latency.

5. Golden query validation catches drift. Regression testing against known-good queries is essential for production AI systems.

The Bottom Line

Finch represents the state of the art in enterprise AI data agents. It's not just about generating SQL; it's about building a complete system for conversational data access with production-grade validation.

The patterns are transferable: semantic layer in a vector store, specialized agent routing, curated data marts, golden query validation, human-in-the-loop for high-stakes decisions.

For finance teams at Uber, queries that once took hours now take seconds. For data teams everywhere, Finch provides a blueprint for what's possible.

Related reading:

- Uber's QueryGPT: Multi-Agent Architecture for Enterprise Scale

- LinkedIn's SQL Bot: 95% Satisfaction Despite 53% Accuracy

- Ramp Research: The Analyst Agent That Answers 1,800 Questions a Month

Rick Radewagen

Rick is a co-founder of Dot, on a mission to make data accessible to everyone. When he's not building AI-powered analytics, you'll find him obsessing over well-arranged pixels and surprising himself by learning new languages.